
Last year, Facebook announced the final migration to campaign budget optimization (CBO), scheduled for February 2020. However, after several delays, in April the company decided to let advertisers keep choosing between setting a budget at the ad set level or at the campaign level, without making CBO the only option available.

In this article, we’ll explain what campaign budget optimization is and when you should choose it, with examples from the practice of Median ads.

How does it work?

Ad Set Level Budget

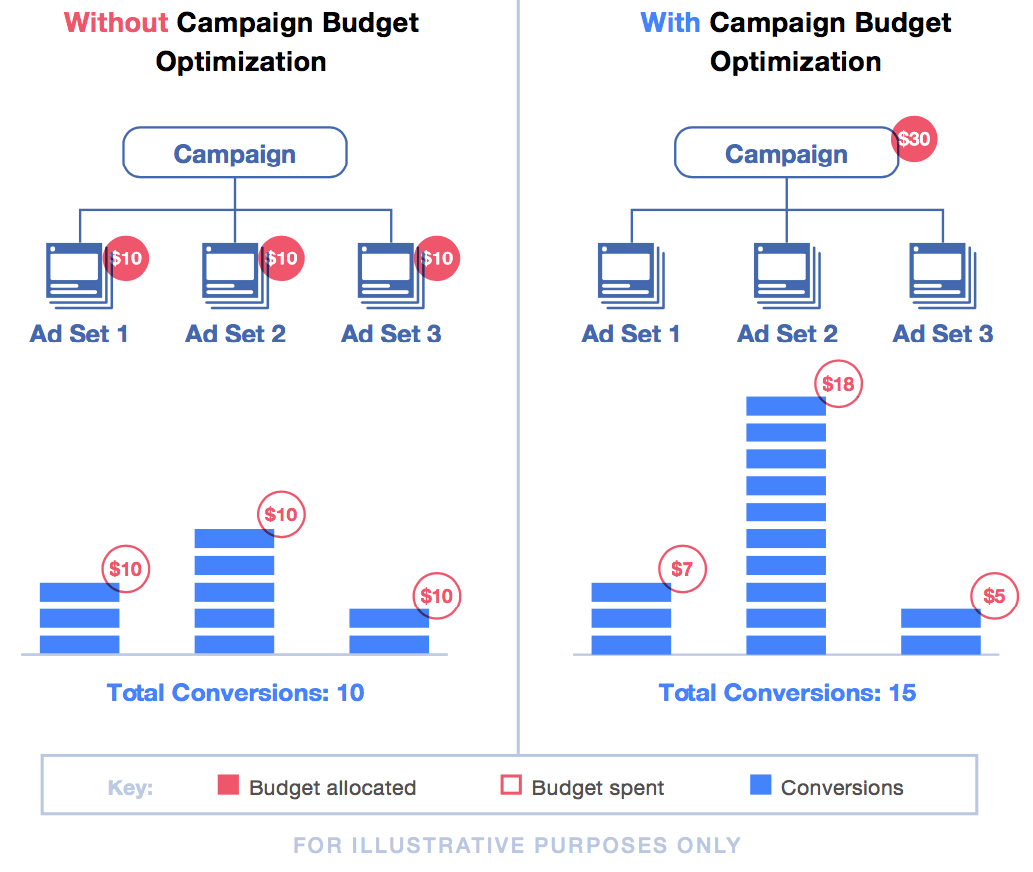

For example, you create an ad campaign with 2 ad sets, each containing 5 ads. Each ad set gets its own budget, spends it in full, and collects its own data history. Facebook pays attention to every ad set, but at the ad level impressions are distributed unequally: the most effective ads get more impressions. This works like a kind of natural selection.

Campaign Level Budget

The budget is shared by all ad sets, and the system automatically distributes it in favor of the most effective ones. The data collected by each set is aggregated at the campaign level for further optimization. “Natural selection” now takes place not only among ads but also among ad sets. The most effective sets will deliver, while the less effective ones will not receive enough impressions or will not deliver at all.
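The idea of one shared budget flowing toward stronger ad sets can be sketched with a toy model. Facebook does not publish its allocation algorithm, so the proportional rule, the function name `distribute_budget`, and all numbers below are assumptions made purely for illustration.

```python
def distribute_budget(campaign_budget, conversion_rates):
    """Toy model (NOT Facebook's real algorithm): split one campaign
    budget across ad sets in proportion to observed conversion rates."""
    total = sum(conversion_rates.values())
    if total == 0:
        # No performance signal yet: fall back to an even split.
        share = campaign_budget / len(conversion_rates)
        return {name: share for name in conversion_rates}
    return {
        name: campaign_budget * rate / total
        for name, rate in conversion_rates.items()
    }


# Hypothetical ad sets and conversion rates.
rates = {"set_a": 0.03, "set_b": 0.01, "set_c": 0.0}
allocation = distribute_budget(100.0, rates)

for name, amount in allocation.items():
    print(f"{name}: ${amount:.2f}")
```

In this sketch the set with no conversions receives nothing, which mirrors the case where a weak ad set does not deliver at all.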

CBO’s Impact On the Advertiser’s Work

We believe that Facebook is trying to simplify the advertiser’s work by focusing on the following advantages:

✔ Saving time. The process is automated, so you don’t need to shift budgets between ad sets manually;

✔ Avoiding audience overlap. If the audience of one ad set overlaps with the audience of another, the budget can be spent on only one of them;

✔ Avoiding restarting the learning phase. Campaign budget optimization does not activate the learning phase when distributing the budget between sets;

✔ CBO allows you to find the most profitable opportunities for all ad sets;

✔ Summarizing data at the campaign level. For example, if there are 3 conversions in one set and 10 in another one, then the advertiser will see the whole picture at the campaign level (13 conversions), and Facebook will understand how to optimize the work of sets in the best way.

However, this update complicates the testing process. A test can be judged objectively only if both ad sets get full delivery.

How do you run tests when campaign budget optimization is on?

There are two options:

- Create as many campaigns as you need for different types of communications and targeting.

- Set spend limits at the ad set level.

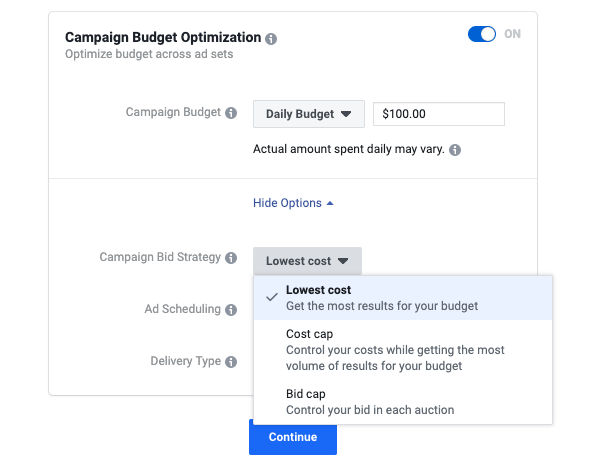

Facebook indicates that spend limits are useful if you choose the lowest cost bid strategy without a bid cap and don’t know the exact budget you need to reach the selected audience. In other cases, the network doesn’t recommend spend limits, since they reduce the system’s flexibility to optimize the budget efficiently.

However, the spend limits also obstruct objective testing.

Let’s imagine that you put $60 at the campaign level and one of the ad sets spends $50. If you set a $30 spend limit for each set, the budget will be redistributed, but not necessarily to the more effective sets. A limit gives the other sets a chance to deliver, yet this is not objective testing, only a “redistribution” of attention.
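The $60 example can be worked through in a short sketch. It assumes the campaign has exactly two ad sets (the text does not say how many) and that spend cut off by a limit moves to the sets that still have room under theirs. The function `apply_spend_limits` is hypothetical and only illustrates the arithmetic, not how Facebook handles limits internally.

```python
def apply_spend_limits(desired_spend, max_spend):
    """Cap each ad set's spend and move the excess to the sets that still
    have room under the cap, in proportion to that room (an assumption)."""
    capped = {name: min(spend, max_spend) for name, spend in desired_spend.items()}
    excess = sum(desired_spend.values()) - sum(capped.values())
    room = {name: max_spend - spend for name, spend in capped.items()}
    total_room = sum(room.values())
    if excess <= 0 or total_room == 0:
        return capped
    moved = min(excess, total_room)
    return {name: capped[name] + moved * room[name] / total_room for name in capped}


# Without limits, the algorithm would spend $50 of the $60 on set_a.
desired = {"set_a": 50.0, "set_b": 10.0}
final = apply_spend_limits(desired, 30.0)
print(final)
```

Here $20 moves from the stronger set to the weaker one purely because of the limit, regardless of performance, which is why such a setup is not an objective test.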

Campaign or ad set optimization: which one to choose?

Facebook recommends choosing CBO, as this option should provide a lower cost per conversion, but our experience shows that this should not be taken on faith. So what should you choose, and in which situation?

We recommend choosing CBO if you have a small promotion budget that cannot reasonably be divided between sets, but you need to run all of them to see which one gives the best result, or to get at least some result. For example, you need to run 2 ad sets on a total budget of $6. You could divide it equally, but it’s better to group the sets into one campaign and optimize the budget at the campaign level, letting the system choose the more effective set. The algorithm may give the budget to one ad set today and get a conversion for $5.5, then to the other ad set tomorrow and get a conversion for $4. If you split the budget equally from the start, each set gets only $3, and there will be no conversions today or tomorrow.
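The arithmetic behind this example can be checked with a small sketch. It makes a strong simplifying assumption: a conversion happens only once an ad set has spent the full cost per conversion. Real delivery is not that deterministic, and the helper `conversions` is hypothetical.

```python
def conversions(budget, cost_per_conversion):
    """Whole conversions a budget can buy at a fixed cost per conversion."""
    return int(budget // cost_per_conversion)


DAILY_BUDGET = 6.0
# Cost per conversion of the ad set that performs best on each day.
best_cost_by_day = {"today": 5.5, "tomorrow": 4.0}

# CBO: the whole daily budget can go to the day's best ad set.
cbo = {day: conversions(DAILY_BUDGET, cost) for day, cost in best_cost_by_day.items()}

# Equal split: each of the two ad sets gets only half of the daily budget.
split = {day: conversions(DAILY_BUDGET / 2, cost) for day, cost in best_cost_by_day.items()}

print("CBO:", cbo)
print("Equal split:", split)
```

Under these assumptions CBO yields one conversion per day, while the equal split yields none, because $3 is below the cost of a conversion in either set.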

Here’s another example of how to use CBO to your advantage. We launched 4 campaigns in 4 countries. Each campaign had its own ad sets, and each set had its own budget. One of the countries performed poorly. To improve results, we selected the most effective sets in each country, put them together in one campaign, and turned on CBO. After that, the country that had shown the worst results started to deliver.

In addition, CBO suits large budgets when you want, and are able, to accumulate data quickly. For example, we have a campaign with 3 ad sets. If you set optimization at the ad set level, history and data are collected separately for each set. One set may optimize better and get 100 conversions, another may do worse and get 70, and the third only 20. Yet the algorithm never works with all 190 conversions at once, since each set runs on its own and spends its own budget. If you choose optimization at the campaign level, the conversions of all sets are summed up, and the optimization takes all 190 into account. More conversions help Facebook better understand whom to look for and show ads to the “right” people. The algorithm then distributes the budget among the sets on its own, prioritizing those that deliver better, cheaper, or more results.
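The difference in how much data each mode of optimization can learn from is easy to see in numbers. The sketch below reuses the 100, 70, and 20 conversions from the example; the set names and the variable `data_multiplier` are illustrative assumptions.

```python
conversions_by_set = {"set_1": 100, "set_2": 70, "set_3": 20}

# Ad set level optimization: each set learns only from its own history.
signal_per_set = dict(conversions_by_set)

# Campaign level optimization: one pool of data shared by all sets.
campaign_signal = sum(conversions_by_set.values())

# How many times more conversion data the shared pool holds than each
# set would have on its own.
data_multiplier = {
    name: campaign_signal / own for name, own in conversions_by_set.items()
}

print("Campaign-level signal:", campaign_signal)
for name, factor in data_multiplier.items():
    print(f"{name}: {factor:.1f}x more data than on its own")
```

The weakest set gains the most: on its own it would contribute only 20 conversions to optimization, while the shared pool contains 190.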

If you have the opportunity to test with budget optimization at the ad set level, we recommend choosing this option. It lets you experiment, duplicate, enable, and disable sets, and move budgets manually at your discretion. If it is important to understand what exactly works (insight, interests, behavior, lookalike, etc.), it is better to optimize at the ad set level. If you don’t care which sets, ads, insights, or targeting deliver and just want the result at a given price, feel free to choose CBO.

How to analyze the results?

Facebook points out that you shouldn’t judge the effectiveness of campaign budget optimization by the spend and the average cost per optimization event of each individual set. The network advises looking at the total results at the campaign level.
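A campaign-level reading of the results can be sketched as follows. The spend and result figures are invented for illustration, and the record layout is an assumption, not an export format of Ads Manager.

```python
# Hypothetical per-set results of one CBO campaign.
ad_sets = [
    {"name": "set_a", "spend": 40.0, "results": 10},
    {"name": "set_b", "spend": 15.0, "results": 2},
    {"name": "set_c", "spend": 5.0, "results": 0},
]


def cost_per_result(spend, results):
    """Return spend divided by results, or None when there are no results."""
    return spend / results if results else None


# Per-set view: set_b looks expensive and set_c looks like pure waste.
per_set = {s["name"]: cost_per_result(s["spend"], s["results"]) for s in ad_sets}

# Campaign view: the figure to evaluate under CBO.
total_spend = sum(s["spend"] for s in ad_sets)
total_results = sum(s["results"] for s in ad_sets)
campaign_cost = cost_per_result(total_spend, total_results)

print("Per set:", per_set)
print("Campaign:", campaign_cost)
```

Judged set by set, two of the three sets look weak, but the campaign as a whole reaches a cost per result of $5, which is the number to compare against your target.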

If an ad set is not delivering, Facebook recommends increasing your bid cap or target cost, changing targeting and/or ad creatives, or choosing another optimization event.

It’s important to remember that a CBO campaign can contain no more than 70 ad sets.

Lookalike Audiences: Use Case From Median ads Team

While promoting an event, we added several saved audiences with detailed targeting to the same campaign. As a result, the first ad set worked well, the second performed worse, and the rest were not delivered at all. Over time, once we had enough data, we created 3 lookalike audiences (0-1%, 1-2%, 2-3%) and placed them in 3 new ad sets in the current campaign.

Because of the campaign’s existing history, Facebook barely delivered ads in the sets with lookalike audiences: they received only 10-20% of the budget at the very beginning, and their spend shrank every day.

We realized that these lookalike audiences could have good potential, so we decided to turn them off in the current campaign and create a new campaign with the same 3 lookalike ad sets. The set with LAL 1-2% delivered and became more profitable than the most effective set in the first campaign. This is a case from our experience, and everyone will have their own data history from their ad campaigns. Still, it is important to remember that if you have hypotheses to validate, it’s better to create a new campaign and run a full test with separate optimization for each ad set, as was possible before.

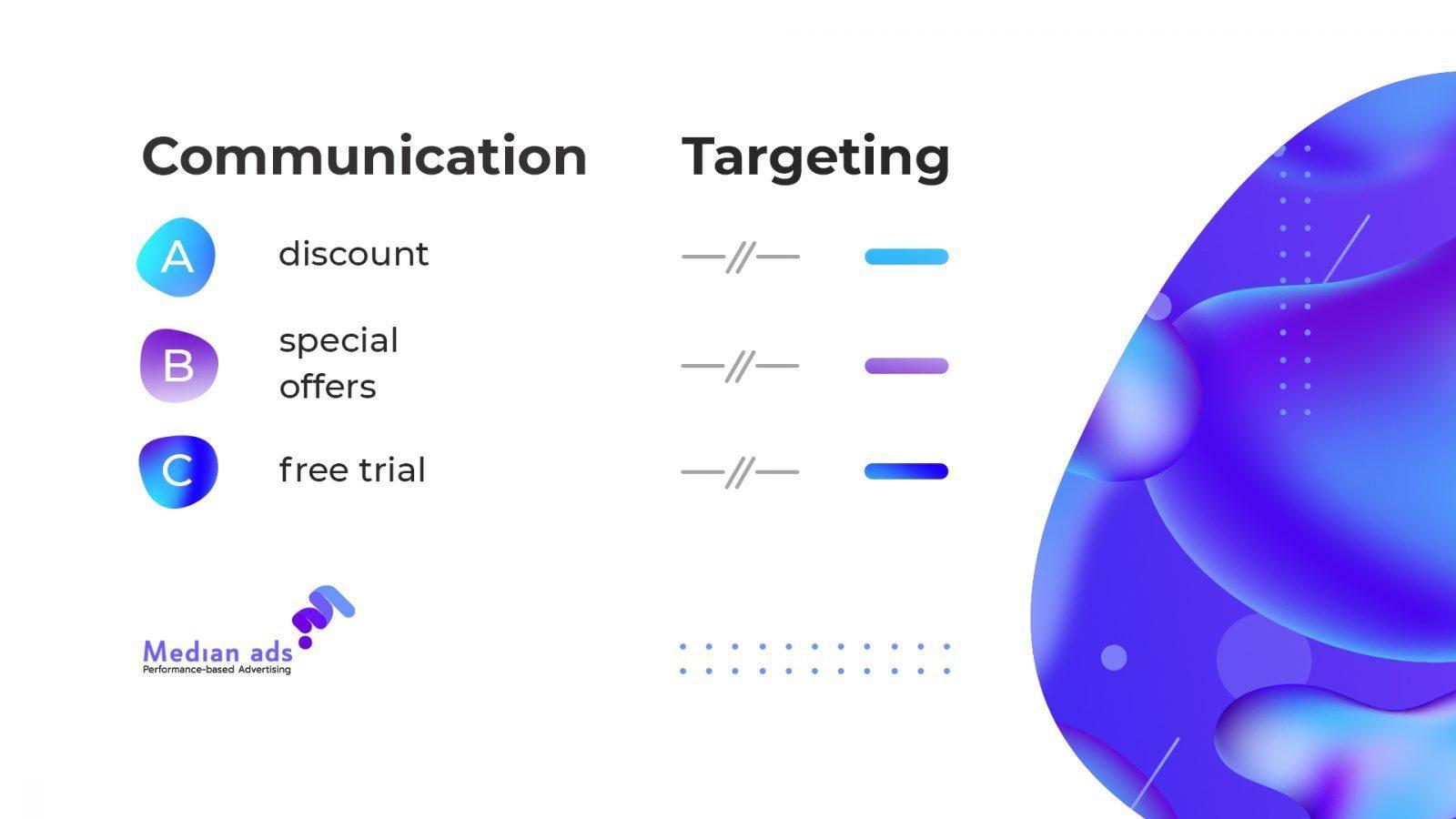

Use Case #2. Testing of Communications

Let’s look at a hypothetical situation. Imagine that there are 3 different types of communications: the first offers a discount, the second offers special deals, and the third offers a free trial period. To test them, we create 3 campaigns, one for each communication.

⦿ Imagine that we used one targeting option and one saved audience for testing. The audience was duplicated in different campaigns for testing different communications. After we understood which communication works best, we turned off the rest of the campaigns to avoid an audience overlap.

However, it is important to analyze the data in Delivery Insights. If the audience overlap is insignificant, we don’t turn off any of the ad sets, since we are dealing with different communications, and even within the same targeting different people can see your ad.

⦿ If we created many ad sets in each campaign and different targeting options gave results, we would not turn off the campaigns, as different audiences respond to different communications. However, if the cost per result of one communication is better than that of the others, you can keep only this campaign to get results at the best price.

⦿ If you use only one communication, leave ads in one set and create one campaign. Facebook will help you to determine which ad copy and targeting will be most effective and distribute ad impressions and budget.

Summary

Since ad impression optimization can be carried out at the campaign level, the results of each ad set are combined. This allows the system to analyze the audience quickly and stabilize the result. However, if you have hypotheses to test about different audiences and communications, you need to create several campaigns so that each test gets attention and ad impressions do not drop. During the testing period, we recommend setting the budget at the ad set level to optimize each targeting option, which differs from set to set. After that, when there is enough data, you can switch to CBO and get an additional opportunity to lower the cost per result.

For more experienced advertisers, the migration to CBO will mean spending more time on the structure of their ad campaigns. Specialists who have just started working with Facebook ads will get some help: Facebook will distribute the budget on its own to the ad sets that work more efficiently. However, if you create only one ad campaign and use only one approach, it’s impossible to find the most profitable result without testing.

