
Testing is an essential step toward high advertising performance. Tests let you move forward based on actual data from customers rather than on the guesses of specialists or business owners.

A number of services, such as AdEspresso, automate ad testing. You can also always test manually and track the results in an Excel spreadsheet.

Tests also differ in the number of variables: you can run either multivariate tests or A/B tests.

Many specialists still doubt the effectiveness of Facebook's built-in solution for A/B tests, called "split testing". While campaign veterans stay loyal to their own strategies, Facebook keeps refining split testing to attract advertisers to the tool.

To help you decide for yourself whether to use Facebook's built-in split-testing solution, we describe its current capabilities as objectively as we can and share our subjective opinion, along with that of invited specialists.

A few words on why testing matters

Advertising means working with living people whose behavior keeps changing. Today you think you've found the way to people's hearts, and tomorrow everything is turned upside down.

Before spending large ad budgets, any professional will formulate several hypotheses to test. Only once the first results and metrics come in will you feel solid ground under your feet and be able to scale your advertising.

You can see this even with something as simple as selling tableware. Your potential customers include catering services, independent cafés, supermarket food courts, chefs, and homemakers. Their hidden needs can lie anywhere between the product's practical usefulness and its visual appeal.

Before testing, all your assumptions rest on past data, research and survey results, and figures from old campaigns. After testing, you can be more confident about the conversions to expect from a given audience.

Audiences and offers are only the beginning of what you can test. Testing also helps fans of unconventional approaches find unexpected insights.

Remember that your basic goal in advertising is to get your message noticed. Ad blindness and irritation are equally your enemies.

Viktor Filonenko, COO of Median ads, shares a real case:

"In my experience, results very often differed from initial expectations. The project I remember most targeted young people interested in video games, and the best metrics came from creatives with typos in the text."

How do you hook a living person without overdoing it? In a word: test.

More about Facebook's built-in split-testing solution

Before Facebook first released split testing, advertisers built their own testing tactics. Most specialists still run tests manually.

With manual testing, you can (and should) build your own strategy to answer your specific question. Facebook's automated solution does not require you to think through any tactics.

Facebook split testing is a tool for testing single-variable variants on autopilot. It is designed to make launching ads as simple as possible, attract more advertisers, and keep their activity inside the platform.

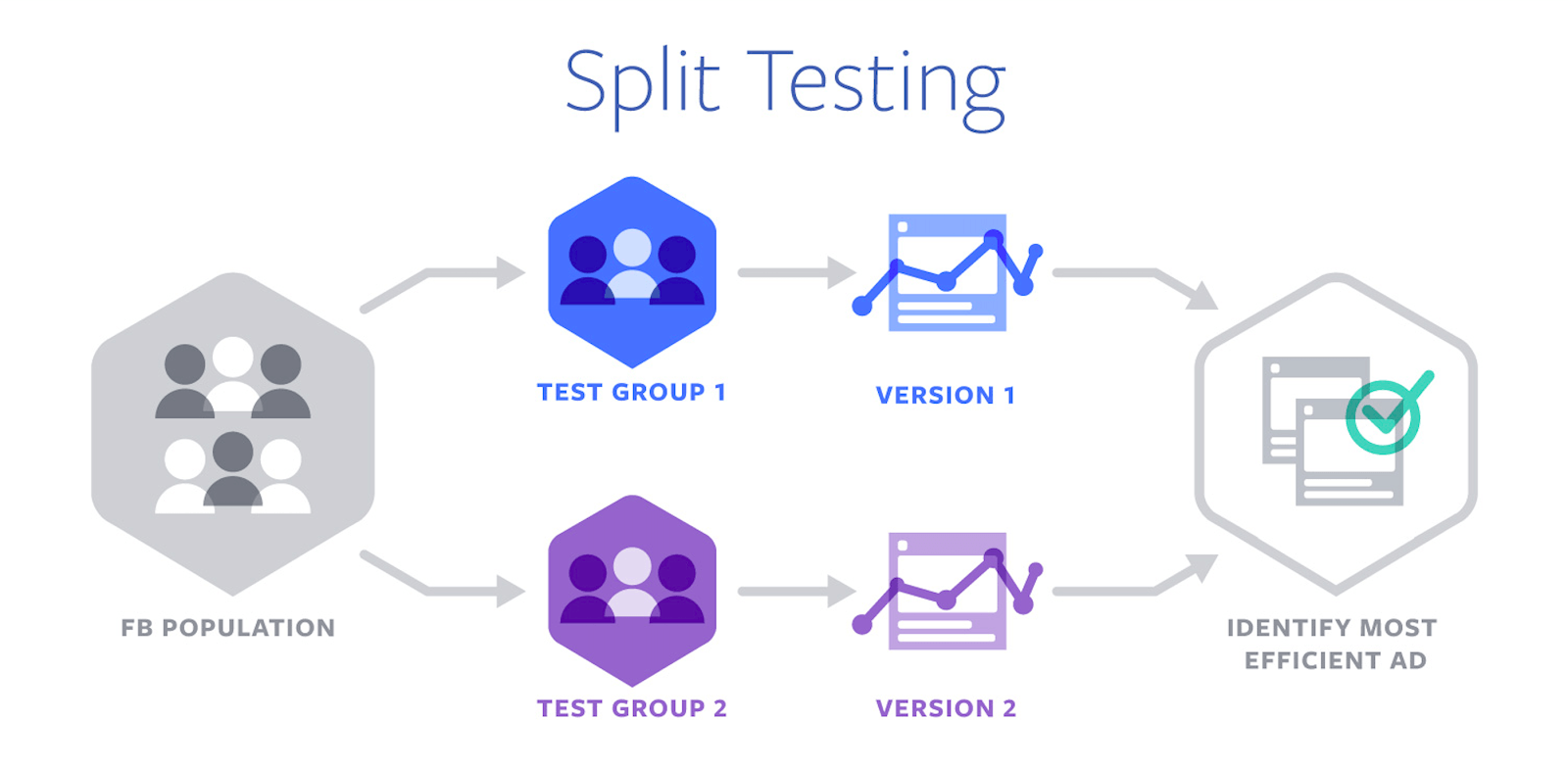

How does automated split testing work?

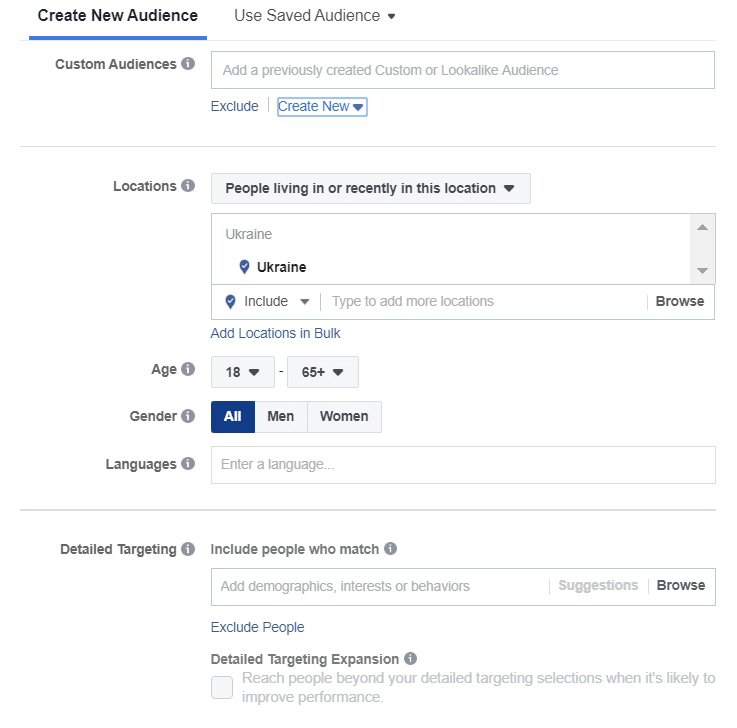

- The audience is split into non-overlapping groups. The entire potential reach is randomly distributed among the ad sets, so different people see different variants.

- Each group differs in only one variable: the thing you want to test. All other settings must be identical.

- Each group's performance is measured against the chosen campaign objective, and the groups are compared to determine the winner.

- You receive a notification when the test ends and can use the winning variant as a template for a new campaign or the next test.
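To make the non-overlapping split more concrete, here is a minimal sketch of one common way to assign users to groups at random. It is an illustration only: Facebook does not disclose how it partitions the audience, and `assign_group`, `test_id`, and the use of SHA-256 hashing are our assumptions. Hashing the user ID together with the test ID puts each user into exactly one group and keeps them there for the whole test.

```python
import hashlib


def assign_group(user_id: str, test_id: str, num_groups: int) -> int:
    """Map a user to exactly one test group (hypothetical illustration)."""
    # Hashing user_id together with test_id gives a stable pseudo-random bucket:
    # the same user always lands in the same group within one test,
    # while a different test_id reshuffles the split.
    digest = hashlib.sha256(f"{test_id}:{user_id}".encode("utf-8")).digest()
    bucket = int.from_bytes(digest[:8], "big")
    return bucket % num_groups


if __name__ == "__main__":
    counts = [0, 0]
    for i in range(10_000):
        counts[assign_group(f"user-{i}", "test-001", 2)] += 1
    # Each of the two groups receives about half of the users.
    print(counts)
```

Because the group is derived from the user ID rather than drawn per impression, one person never sees both variants, which is the property the tool advertises.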

How to launch a split test

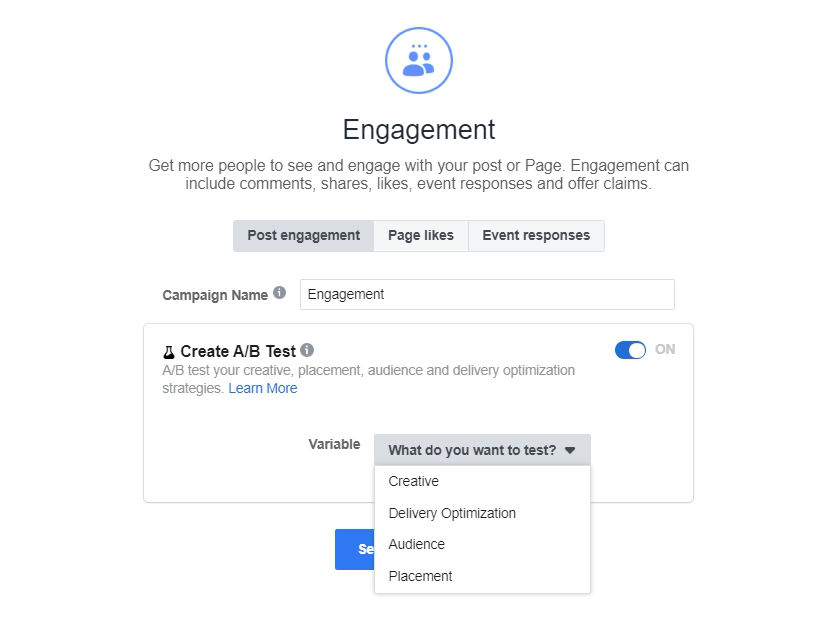

Since the latest update, you can launch a split test simply by ticking a checkbox in the campaign objective selection window.

You will then see additional options in the campaign settings. First of all, a window for choosing the variable appears.

Besides the settings specific to the chosen variable, you can also configure how the budget is split among the tested groups. By default, the budget is split equally.
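The default equal split, and an uneven one, can be sketched as follows. This is a hypothetical helper, not part of Ads Manager: `split_budget` works in cents so that rounding never makes the parts add up to more or less than the total.

```python
from __future__ import annotations


def split_budget(total_cents: int, weights: list[float]) -> list[int]:
    """Split a budget (in cents) among groups in proportion to weights.

    Equal weights reproduce the default equal split. Cents lost to rounding
    go to the groups with the largest fractional remainders, so the parts
    always add up to the total.
    """
    if not weights or any(w < 0 for w in weights) or sum(weights) == 0:
        raise ValueError("weights must be non-negative and not all zero")
    total_weight = sum(weights)
    exact = [total_cents * w / total_weight for w in weights]
    parts = [int(x) for x in exact]  # round each share down
    leftover = total_cents - sum(parts)
    # Hand out the leftover cents, largest remainder first.
    order = sorted(range(len(weights)), key=lambda i: exact[i] - parts[i], reverse=True)
    for i in order[:leftover]:
        parts[i] += 1
    return parts
```

For example, `split_budget(10000, [2, 1])` gives `[6667, 3333]`: the extra cent goes to the group with the larger rounding remainder.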

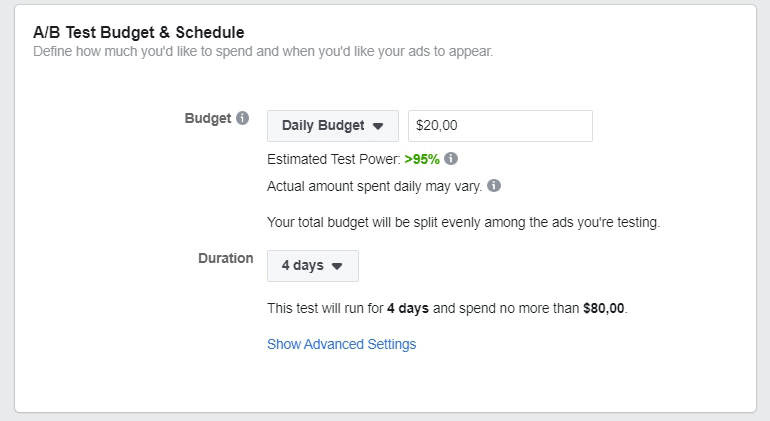

When working with split tests, keep the "sensitivity" of the test in mind. Sensitivity is the probability of detecting a difference between the ads if one exists. The less money you are willing to spend on a test, the lower its sensitivity. For example, with a daily budget of $10, the sensitivity is 62%. Facebook recommends a budget that reaches a sensitivity of 80%, which improves the chances of a successful test.
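In statistical terms, the sensitivity described here is the power of the test. Facebook does not publish how it estimates it, so the sketch below uses a textbook normal-approximation formula for comparing two conversion rates. All inputs (conversion rates of 2% and 3%, a $10 CPM, one impression per user, a 7-day test, two groups) are hypothetical, and the printed figures are not comparable to Facebook's estimates; the point is only to show that a smaller budget means fewer users per group and therefore lower power.

```python
from statistics import NormalDist


def estimate_power(p_a: float, p_b: float, n_per_group: int, alpha: float = 0.05) -> float:
    """Approximate power of a two-sided two-proportion z-test (normal approximation)."""
    z = NormalDist()  # standard normal distribution
    z_crit = z.inv_cdf(1 - alpha / 2)  # about 1.96 for alpha = 0.05
    p_bar = (p_a + p_b) / 2
    # Standard error of the difference if there is no real difference...
    se_null = (2 * p_bar * (1 - p_bar) / n_per_group) ** 0.5
    # ...and if the true rates are p_a and p_b.
    se_alt = ((p_a * (1 - p_a) + p_b * (1 - p_b)) / n_per_group) ** 0.5
    # Probability that the observed difference clears the significance bar
    # (the negligible opposite tail is ignored).
    return z.cdf((abs(p_a - p_b) - z_crit * se_null) / se_alt)


def users_per_group(daily_budget: float, days: int, num_groups: int, cpm: float) -> int:
    """Hypothetical: users reached per group with an equal budget split,
    assuming one impression per user at the given cost per 1,000 impressions."""
    return round(daily_budget * days / num_groups * 1000 / cpm)


if __name__ == "__main__":
    # Hypothetical inputs: 2% vs 3% conversion rate, $10 CPM, 7-day test, 2 groups.
    for daily in (10, 50, 200):
        n = users_per_group(daily, days=7, num_groups=2, cpm=10.0)
        print(f"${daily}/day: {n} users per group, power {estimate_power(0.02, 0.03, n):.0%}")
```

A larger budget buys more users per group, which shrinks both standard errors and raises the power; this is the trade-off behind the 80% recommendation.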

Which variables can you test?

Split testing currently lets you compare variables in 4 categories:

- Creative. If you're not sure which headline or image will work, this is your option.


- Placement.

- Audience.

- Delivery optimization. In this section you can also set or remove a bid cap and choose whether to pay for impressions or for clicks.

Since the April update, split testing is available for the following campaign objectives: Traffic, App Installs, Lead Generation, Conversions, Video Views, Catalog Sales, Reach, and Engagement.

Can you edit ads running in a split test?

Making changes to a split test that is already running is strongly discouraged.

Even if you don't change the variable itself, changing other settings in a group can compromise the "purity of the experiment". Simply put, the choice of the best variant will no longer be objective. For example, suppose you are testing different audiences and then change something in the creative. The audience starts responding more actively, but you can no longer say for sure whether that is the result of optimization or of the improved creative.

If you do change one ad set, you must make the same change to the other. Mismatches between the groups after editing can cause the campaign to stop.

That said, some changes are generally considered acceptable:

- At the ad level, you can fix a minor typo.

- At the campaign level, you can edit the budget or the schedule.

How to evaluate split test results

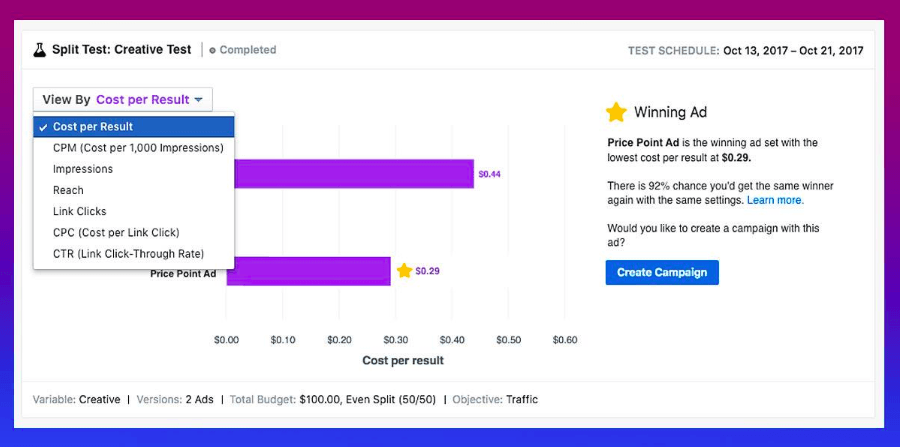

As soon as the winner is determined, you receive a notification in Ads Manager and by email.

In the latest update, Facebook also added a new chart for tracking the performance of an active split test.

Besides the winner, the resulting cost per result, and the amount spent, you will see the probability of getting the same winner next time.

For the winner of a split test, this value should be above 75%. If you run 3, 4, or 5 groups in a split test at once, values below 40%, 35%, and 30% respectively indicate similar performance with no clear favorite.
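Facebook does not publish how it computes this probability. One common way to estimate a similar "chance to win" figure is Bayesian: model each group's conversion rate with a Beta posterior, sample from it many times, and count how often each group comes out on top. The sketch below does that and then applies the thresholds quoted above. The uniform Beta(1, 1) prior, the sample count, the function names, and the example results are our assumptions, not Facebook's method.

```python
import random

# Thresholds quoted above: minimum win probability for a clear favorite,
# keyed by the number of groups (2 to 5; other counts raise KeyError).
CLEAR_WINNER_THRESHOLD = {2: 0.75, 3: 0.40, 4: 0.35, 5: 0.30}


def win_probabilities(results, samples=20_000, seed=0):
    """Estimate P(each group has the highest conversion rate) via Beta posteriors.

    results: list of (conversions, users) tuples, one per group.
    Uses a uniform Beta(1, 1) prior (an assumption, not Facebook's method).
    """
    rng = random.Random(seed)
    wins = [0] * len(results)
    for _ in range(samples):
        # One plausible conversion rate per group, drawn from its posterior.
        draws = [rng.betavariate(1 + conv, 1 + users - conv) for conv, users in results]
        wins[draws.index(max(draws))] += 1
    return [w / samples for w in wins]


def clear_winner(results):
    """Return the index of the clear winner, or None if no group passes the threshold."""
    probs = win_probabilities(results)
    best = max(range(len(probs)), key=probs.__getitem__)
    threshold = CLEAR_WINNER_THRESHOLD[len(results)]
    return best if probs[best] > threshold else None


if __name__ == "__main__":
    # Hypothetical results: (conversions, users reached) per group.
    results = [(70, 3500), (105, 3500)]
    print(win_probabilities(results), clear_winner(results))
```

With three or more evenly matched groups, each one wins roughly equally often, so none clears the threshold and `clear_winner` returns `None`, matching the "no clear favorite" case described above.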

If your test results show low confidence, Facebook recommends re-running the test for a longer period or with a higher budget.

As for budget settings, the system has its own recommendations: a split test needs 3 to 14 days, and the recommended budget is at least 440 USD.
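If the recommended minimum of 440 USD is treated as the total for the whole test and spent evenly over its duration $d$ (an assumption on our part; the tool may pace spending differently), the daily budget at the two ends of the recommended range works out as follows, and with two equal groups each group gets half of it:

$$
\text{daily budget} = \frac{440\ \text{USD}}{d}: \qquad d = 3 \;\Rightarrow\; \approx 146.67\ \text{USD/day} \;(\approx 73.33\ \text{per group}), \qquad d = 14 \;\Rightarrow\; \approx 31.43\ \text{USD/day} \;(\approx 15.71\ \text{per group})
$$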

This brings us back to the question: should you use Facebook's tool instead of manually testing different ad sets?

What sets Facebook's built-in split testing apart

The main advertised difference of Facebook's split test is that there is no audience overlap.

The audiences of the tested groups are guaranteed to be different and mutually exclusive. This way, you don't compete against yourself in auctions and avoid driving up the cost per result.

On the other hand, showing different variants to the same user may be exactly what you need. For example, you may want a user to see both variants and point to the real winner through their choice. The tool rules this out: variants A and B are seen by different users who share the characteristics defined by your targeting.

You can still show different messages to the same user to find out which works better on an identical audience by structuring your campaign accordingly. In that case, you need to cover a large enough share of the audience to ensure a high probability that people see both variants.
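The requirement to cover enough of the audience can be made concrete with simple arithmetic. Assuming, as a simplification, that each of the two ad sets reaches users independently of the other, the chance that a given user sees both is the product of the two reach shares. The function names and the reach figures below are hypothetical.

```python
def prob_sees_both(reach_share_a: float, reach_share_b: float) -> float:
    """Probability that one user sees both variants, assuming independent reach."""
    for share in (reach_share_a, reach_share_b):
        if not 0.0 <= share <= 1.0:
            raise ValueError("reach shares must be between 0 and 1")
    return reach_share_a * reach_share_b


def required_reach_share(target: float) -> float:
    """Equal reach share per ad set needed for a target probability of seeing both."""
    if not 0.0 <= target <= 1.0:
        raise ValueError("target must be between 0 and 1")
    return target ** 0.5


if __name__ == "__main__":
    for share in (0.3, 0.5, 0.8):
        print(f"each ad set reaches {share:.0%}: {prob_sees_both(share, share):.0%} see both")
    print(f"for 64% to see both, each ad set must reach {required_reach_share(0.64):.0%}")
```

Under this assumption, even at 50% reach per ad set only a quarter of users see both messages, which is why the coverage has to be high.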

The Median ads team remains a proponent of manual testing. We'll gladly explain how to build such tests in upcoming posts. Subscribe to our blog and leave us feedback in the comments and messages so we can prepare the most relevant information for you.

What do advertisers who have tried split testing say about it?

We ran a small survey among advertisers about how they use the tool.

Below are the opinions of the specialists who agreed to share their comments with us.

Nicholas Lee of IHS Digital is completely satisfied with the tool and recommends it to everyone:

"At the company where I work, we wanted to test manual bidding on our best audience. We set the duration to 30 days and the budget to $600 per day per ad set. We ran the test for 7 days and switched it off once we decided the winner was clear. Yes, split tests have minimum duration and spend values, but you can switch the test off at any time. Nobody traps you into spending."

"Usually, when you run two ad sets on the same audience, overlap puts you at risk of an inflated cost per result or of losing impressions because you get removed from the auction. The new tool lets you stop worrying about that, because the system takes care of splitting the audience between the groups," Nicholas continues.

"I think it depends on the audience size," says Ted Sanders, a marketer from Colorado. "The tool isn't particularly effective on small audiences, but with large ones it brings better results. I came to this conclusion after listening to a podcast on Digital Marketer and running a number of tests for my employer."

"I find the tool useful if the budget is sufficient… With split tests you can get a 'clean' result without overlap. And now you can also test different parameters before moving on to large spending. However, sometimes the budget doesn't allow running split tests, and then manual A/B testing plays an important role," notes Lars Pleto, who runs ads in Germany in the automotive and fitness niches.

Petr Kostyukov, Digital Marketing Analyst at Profi.Travel and author of the Targetboy blog, confirms the concerns about cost in his comment:

"I used this tool only in its first release, and I didn't like it because of the high CPM. The tool has been updated since then, but I haven't had a reason to try it again. Pragmatically speaking, I think this kind of split testing is only needed by ecommerce businesses with a large number of ads."

Overall, we also received plenty of recommendations to run tests manually, "if you know how". The advantages of manual testing include not only lower spend but also easier scaling.

Evgeny Mokin, CEO of Median ads, adds:

"Split testing was originally available only in Ads Manager and was missing from Power Editor. Now that the two have been merged, all of us see Facebook's prompt to run a split test while creating a campaign.

"I think this tool suits those who haven't yet fully figured out how to structure ad campaigns. If you already understand campaign structures, manual testing is the better choice. You can not only adjust your tests but also start scaling at any moment once the right signals appear."

We'll definitely cover how to build campaigns for manual testing in future articles. So subscribe, follow our updates, and don't miss anything!
